Essential Privacy and Regulatory Research at Your Fingertips

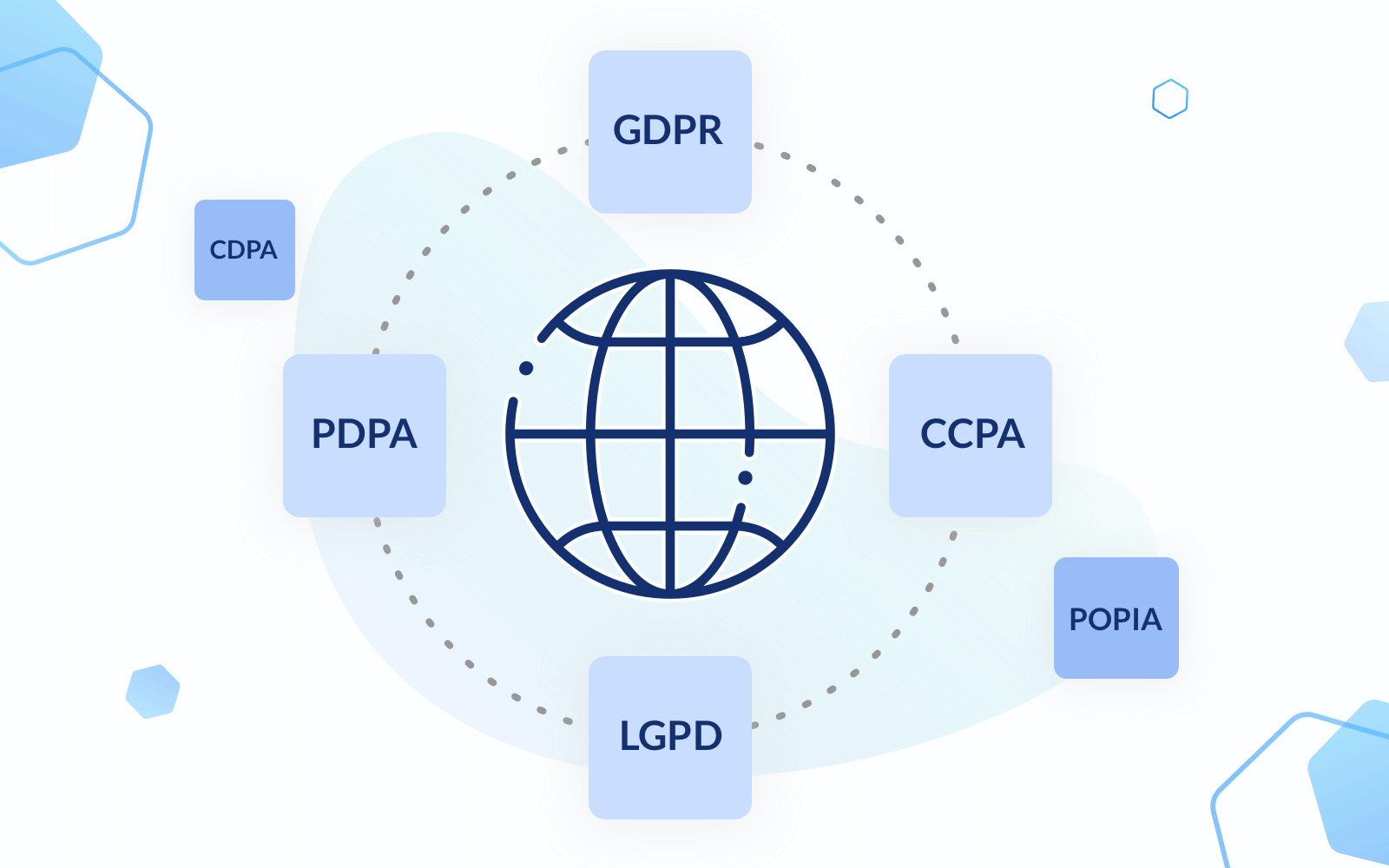

Find everything you need to stay up to date on evolving privacy and security regulations around the world.

PORTAL

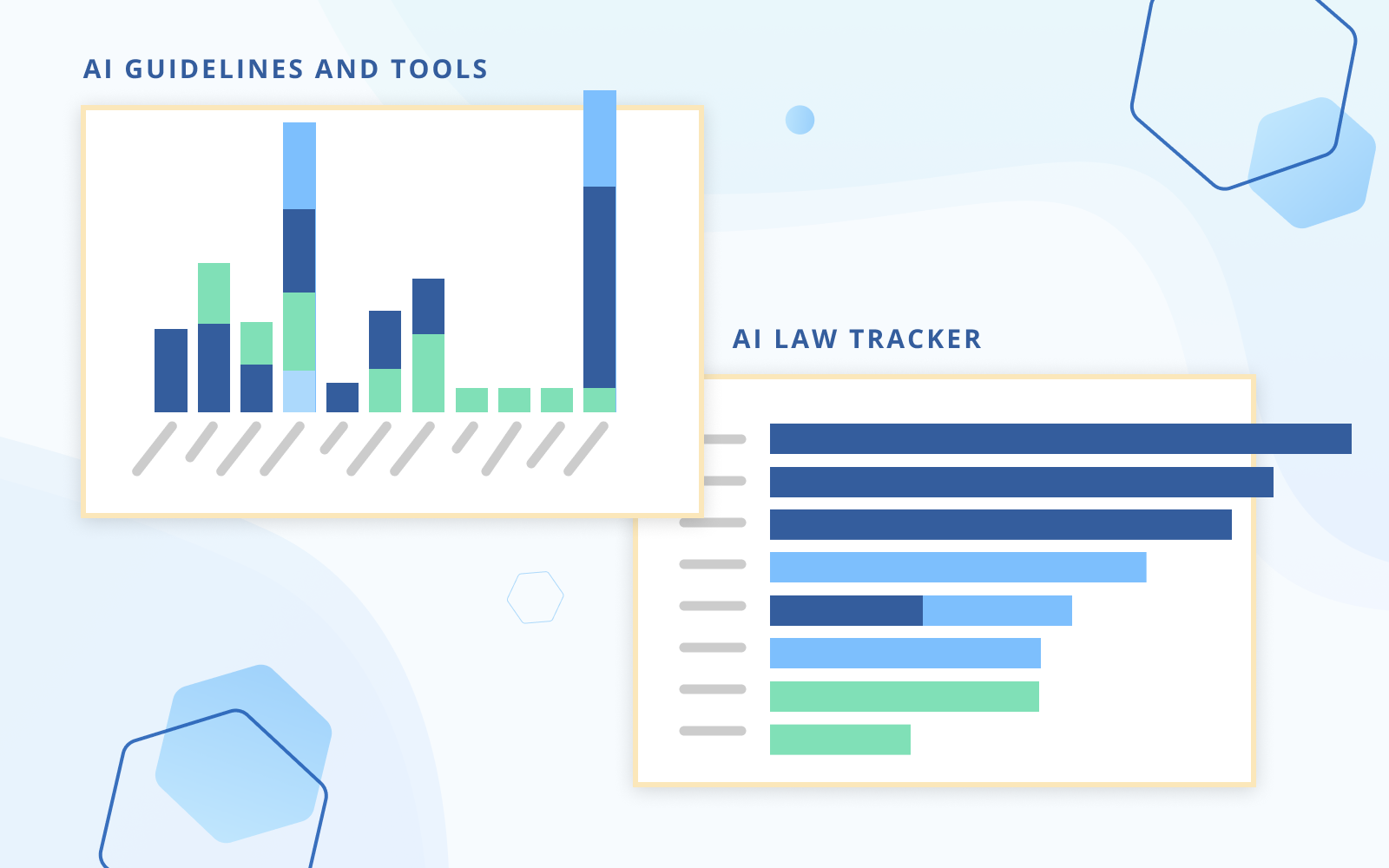

Artificial Intelligence

Stay up to date with the latest AI developments and track the status of global AI laws and frameworks.

Featured Laws

Featured Topics

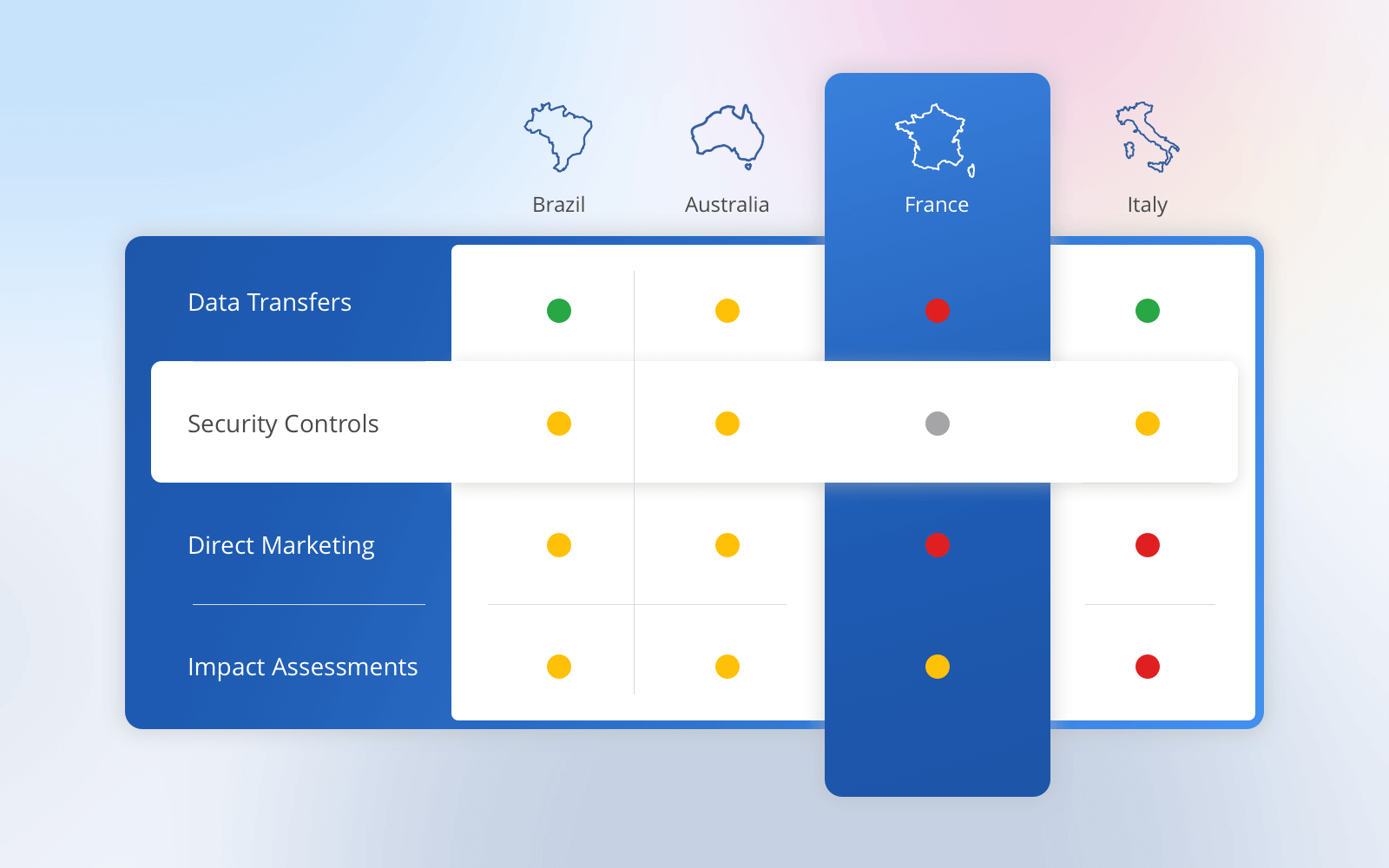

Jurisdictions

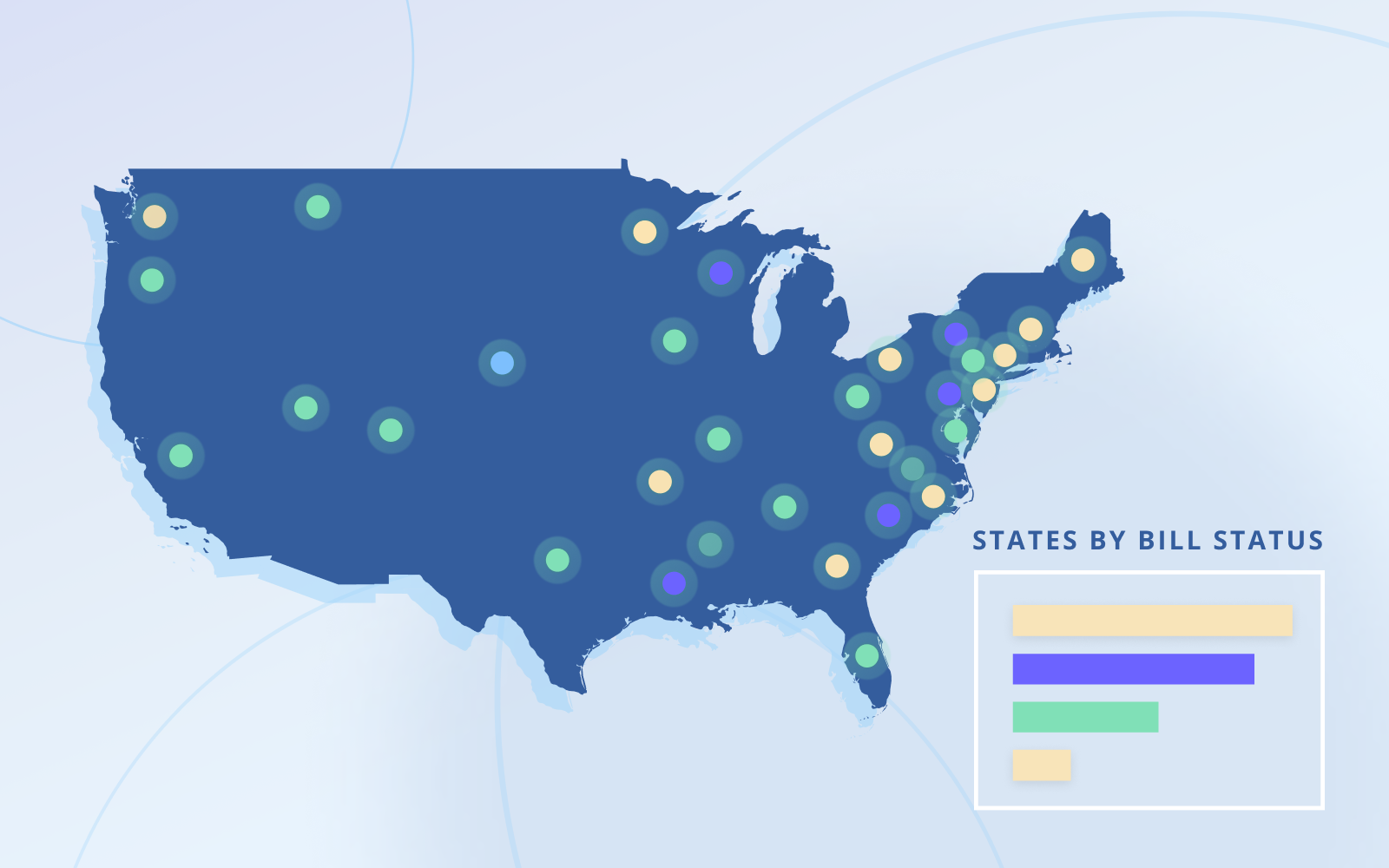

Click a country below to explore its privacy laws.

Legend:

Privacy law

Draft privacy law

No privacy law